Biography

Hi, I am Huanqia Cai, a researcher at Alibaba Tongyi Lab. My research interests lie in multimodal generation and understanding. Currently, I focus on using reinforcement learning to improve the visual fidelity of image generation and the complex reasoning capabilities of multimodal models. Specifically, I am responsible for the reinforcement learning alignment of the Z-Image foundation model.

News

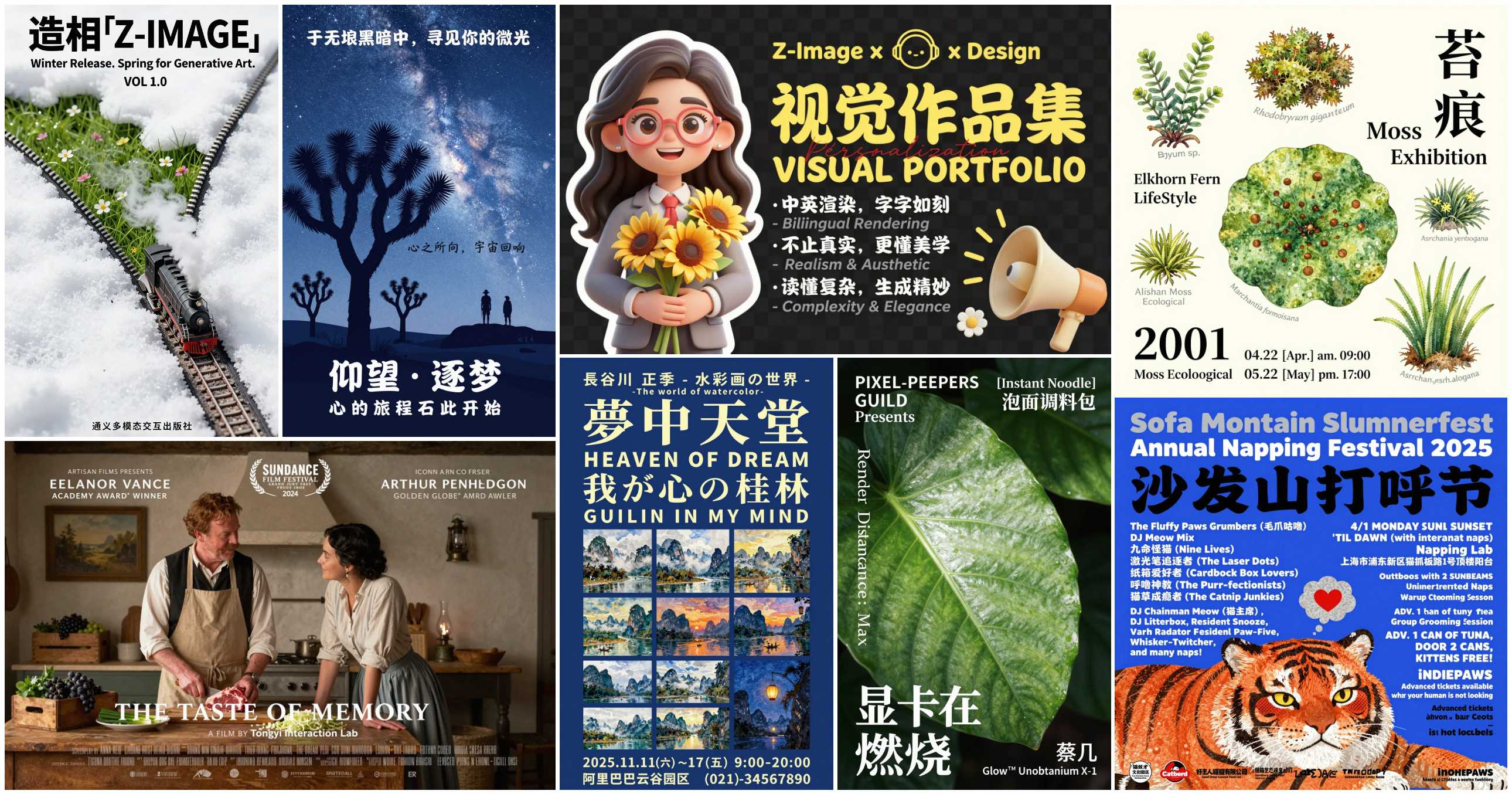

- 2026 Released Z-Image (Base), a high-quality foundation model specializing in rich aesthetics and controllability. The Z-Image series is now the #2 most popular Chinese model on Hugging Face and ranks 2nd in global image generation API usage on fal.ai.

- 2025 Released Z-Image-Turbo, an efficient foundation model specializing in photorealistic image generation.

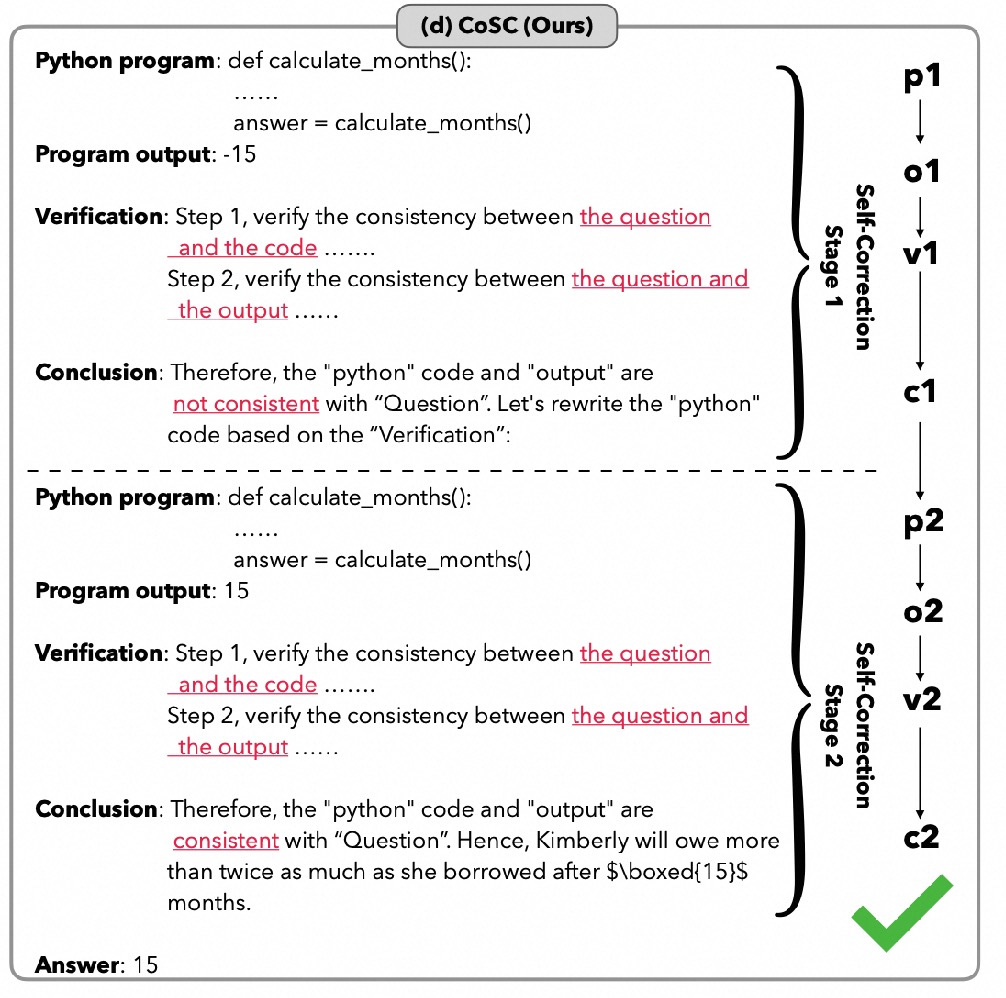

- 2024 New paper on Self-Correction in LLMs released on arXiv.

- 2024 Released MM-IQ, a new benchmark for assessing the core reasoning capabilities of large multimodal models.

- 2024 Paper "System-2 Mathematical Reasoning" accepted to TMLR.

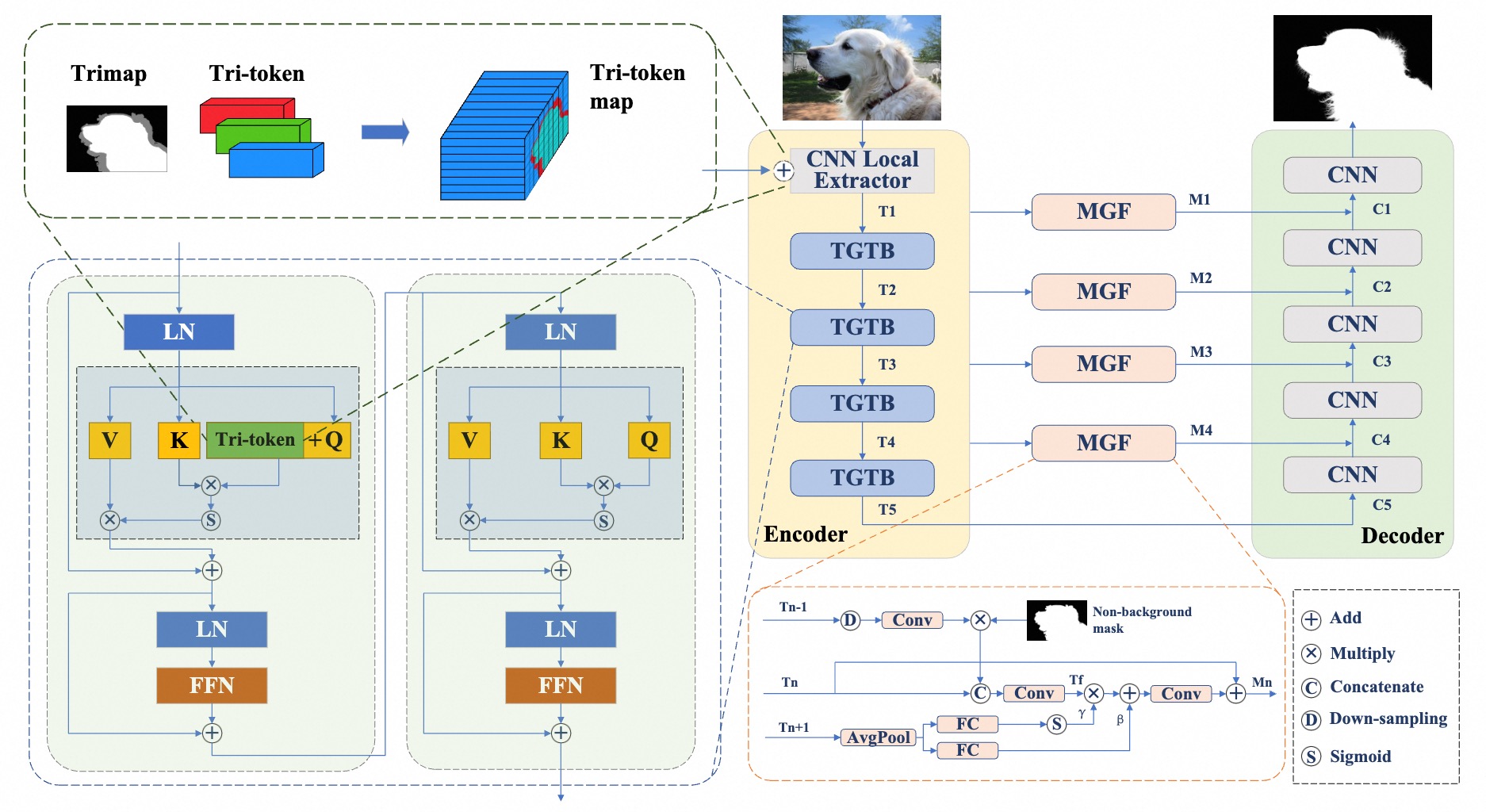

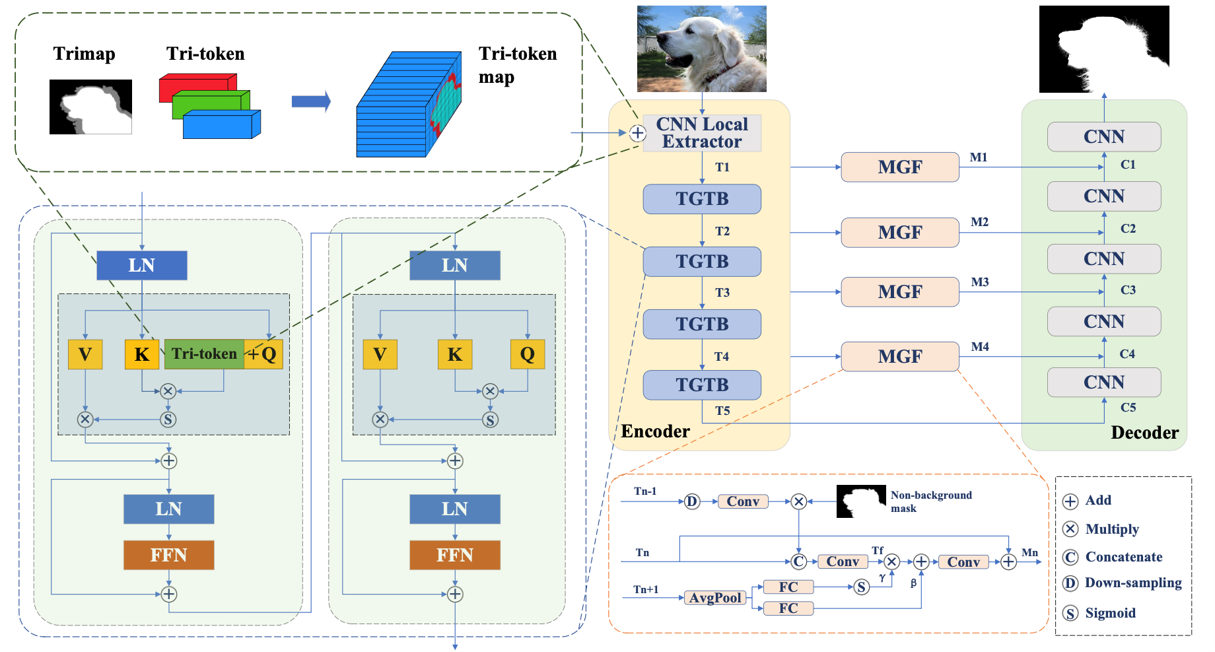

- 2023 The extended paper "Tri-token Equipped Transformer Model for Image Matting" released on arXiv.

- 2022 TransMatting accepted to ECCV.

- 2021 Won the 2nd Place Award in the NTIRE 2021 Challenge on Multi-modal Aerial View Object Classification at CVPR 2021.

Publications

Tech Report, 2025

Z-Image is a state-of-the-art foundation model designed for high efficiency.

Community Impact: Z-Image is the #2 most popular Chinese model on Hugging Face and ranks 2nd in global API usage on fal.ai (behind only Gemini Nano). It generates photorealistic images with superior visual quality while maintaining low computational cost.

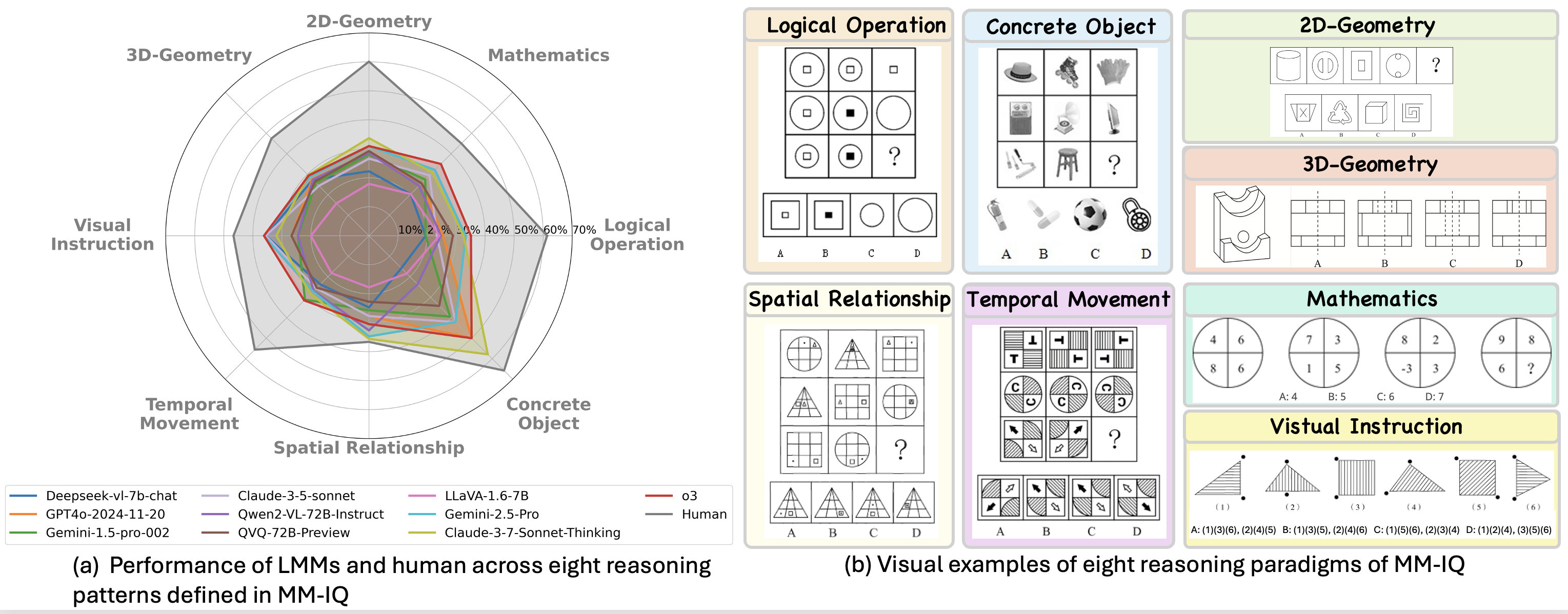

MM-IQ: Benchmarking Human-Like Abstraction and Reasoning in Multimodal Models

arXiv Preprint, 2024

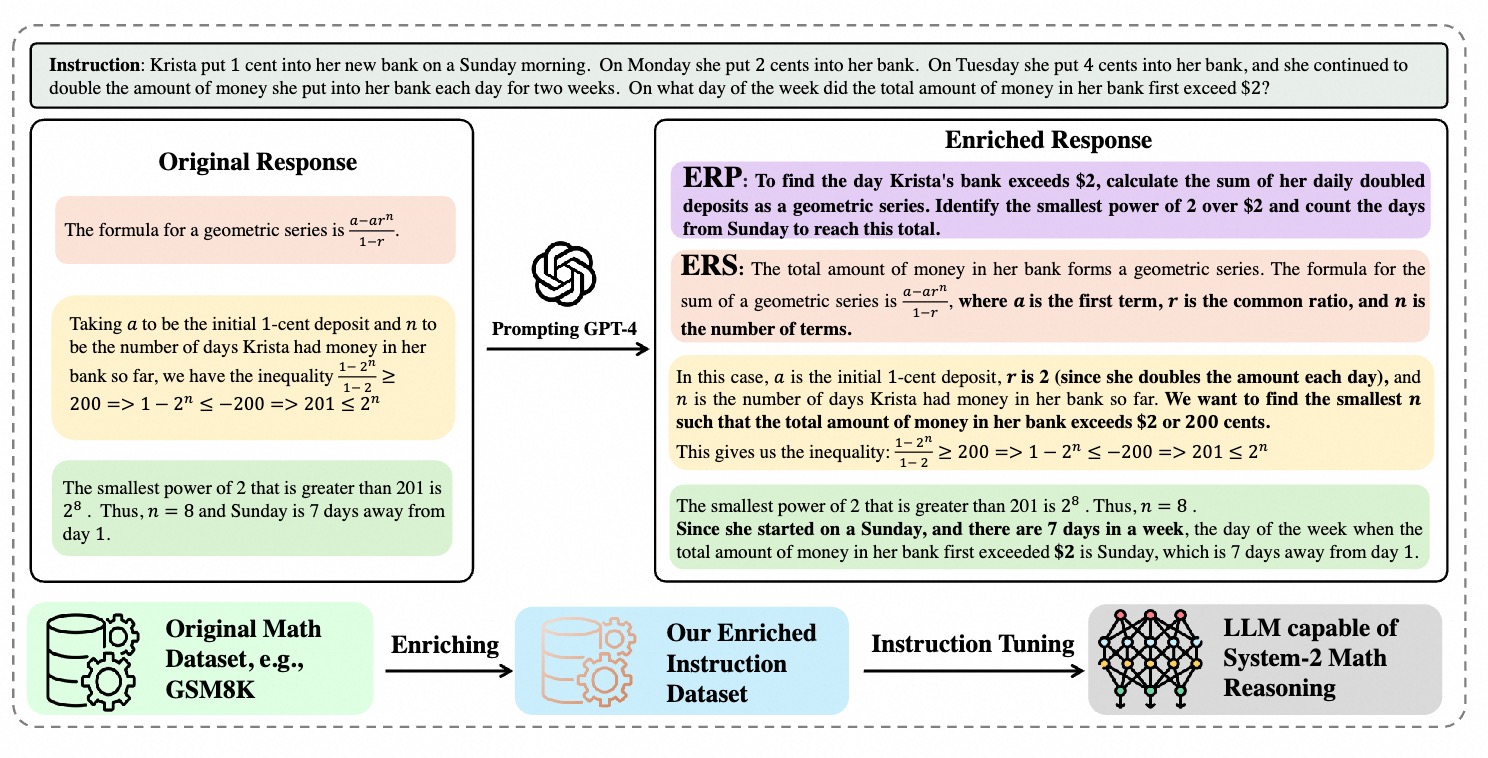

System-2 Mathematical Reasoning via Enriched Instruction Tuning

Transactions on Machine Learning Research (TMLR), 2024

TransMatting: Enhancing Transparent Objects Matting with Transformers

European Conference on Computer Vision (ECCV), 2022